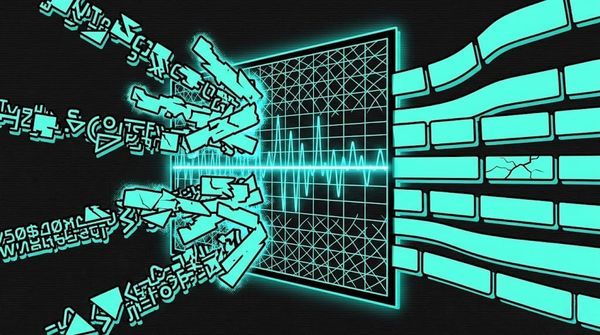

Perplexity Filtering: The First LLM Defense (And Why Evolutionary Attacks Broke It)

In the previous post on evolutionary jailbreaking, I noted that GCG suffixes are “trivially detectable by any perplexity filter.” That line hides an entire chapter of the LLM security story. Perplexity filtering was the first automated defense against jailbreaking, proposed within months of GCG’s publication [1]. For a brief